Unlocking the Power of k-Nearest Neighbors: A Comprehensive Python Project for High-Performing Classification

Dive into machine learning with our step-by-step guide to implementing a k-Nearest Neighbors Classifier (k-NN-C) from scratch in Python. In this project write-up, we’ll explore the following topics:

- An overview of the Iris Dataset and its attributes

- The inner workings of the k-NN-C model as a lazy learning algorithm

- The importance of cross-validation and its application in our project

- Calculating the Euclidean distance between samples and using it to generate predictions

- Evaluating the model and incorporating user input for real-time predictions
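To make the lazy-learning and Euclidean-distance topics above more concrete, here is a minimal, standard-library-only sketch of how a k-NN classifier can compute distances and turn them into a prediction. The function names `euclidean_distance` and `predict`, the plain majority vote, and the toy data are our own assumptions for illustration; they are not taken from the project's source code.

```python
import math
from collections import Counter
from typing import Sequence


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Straight-line distance between two feature vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def predict(train_X, train_y, query, k=3):
    """Classify `query` by a majority vote among its k nearest training points.

    Hypothetical helper: k-NN is a lazy learner, so there is no training
    step and all of the work happens here, at prediction time.
    """
    # Pair each training label with its distance to the query, closest first.
    neighbors = sorted(
        zip((euclidean_distance(x, query) for x in train_X), train_y),
        key=lambda pair: pair[0],
    )[:k]
    # Majority vote. On a tie, Counter.most_common keeps first-seen order,
    # so the label whose member is nearest to the query wins.
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Toy usage with two made-up 2-D clusters (not Iris data).
X = [[1.0, 1.0], [1.2, 0.8], [0.9, 1.1], [5.0, 5.0], [5.2, 4.9], [4.8, 5.1]]
y = ["a", "a", "a", "b", "b", "b"]
print(predict(X, y, [1.1, 1.0], k=3))  # → a
print(predict(X, y, [5.1, 5.0], k=3))  # → b
```

Because nothing is learned ahead of time, every call to `predict` scans all stored training points, which is what makes k-NN a lazy learner.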

Join us as we demystify the process of building a k-NN classifier from scratch and look at where it can be applied in practice. Whether you’re a seasoned data scientist or just starting your adventure in artificial intelligence, this project write-up offers practical guidance for developing an accurate k-NN classifier.

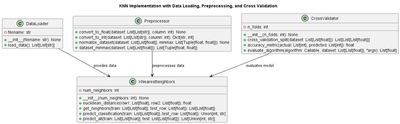

Project Components and Classes

The project organizes the k-NN-C implementation into several classes, including:

- KNearestNeighbors: The core class implementing the k-NN-C algorithm.

- DataLoader: A class responsible for loading and parsing the Iris Dataset.

- Preprocessor: A class that handles data preprocessing tasks, such as feature normalization and dataset shuffling.

- CrossValidator: A class that manages the cross-validation process for model evaluation.

These classes are designed to be modular and reusable, so the code can be adapted to other machine learning problems.
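As a hedged sketch only, the preprocessing and cross-validation responsibilities described above could look roughly like the standalone functions below. The names `min_max_normalize`, `shuffle_dataset`, and `k_fold_indices` are hypothetical, and min-max scaling is an assumption: the write-up does not say which normalization the project's `Preprocessor` applies or how its `CrossValidator` forms folds.

```python
import random
from typing import List, Sequence, Tuple


def min_max_normalize(X: Sequence[Sequence[float]]) -> List[List[float]]:
    """Rescale every feature column to [0, 1] (assumed normalization scheme)."""
    columns = list(zip(*X))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [
            # A constant column would divide by zero, so map it to 0.0.
            (value - lo) / (hi - lo) if hi > lo else 0.0
            for value, lo, hi in zip(row, lows, highs)
        ]
        for row in X
    ]


def shuffle_dataset(X, y, seed=None):
    """Shuffle features and labels together so each row keeps its label."""
    rows = list(zip(X, y))
    random.Random(seed).shuffle(rows)
    shuffled_X = [list(features) for features, _ in rows]
    shuffled_y = [label for _, label in rows]
    return shuffled_X, shuffled_y


def k_fold_indices(n_samples: int, n_folds: int) -> List[Tuple[List[int], List[int]]]:
    """Split indices 0..n_samples-1 into n_folds (train, test) pairs.

    Each index lands in exactly one test fold; fold sizes differ by at most one.
    """
    fold_sizes = [
        n_samples // n_folds + (1 if i < n_samples % n_folds else 0)
        for i in range(n_folds)
    ]
    splits = []
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        splits.append((train, test))
        start += size
    return splits
```

Shuffling before splitting matters for Iris in particular: the classic data file lists its 150 samples grouped by species, so contiguous folds taken without shuffling would each be dominated by one or two classes.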

Class and Sequence Diagrams

The class and sequence diagrams show the structure of the k-NN classifier project’s classes and how they interact.

GitHub Repository Access

To access the complete project files and source code, visit our GitHub repository:

Feel free to explore, clone, or fork the repository to experiment with the code and make your own improvements.

Conclusion

In this project, we built a k-Nearest Neighbors Classifier from scratch in Python. Using 5-fold cross-validation with the number of neighbors set to k = 10, the model reached 96.67% accuracy on the Iris Dataset. Implementing the k-NN-C model from the ground up shows how the algorithm works internally and where it can be useful in practice. We encourage you to experiment with the code in the GitHub repository and try the k-NN-C model on other classification tasks.
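The evaluation setup from the conclusion (5-fold cross-validation with 10 neighbors) can be sketched as follows. This is a standard-library-only sketch under our own assumptions, not the repository's `CrossValidator`: the function names are hypothetical, folds are formed round-robin from a shuffled index list, and the toy two-cluster data stands in for the Iris Dataset, so its output says nothing about the reported 96.67%.

```python
import math
import random
from collections import Counter


def knn_predict(train_X, train_y, query, k):
    """Majority vote among the k training points closest to `query`."""
    nearest = sorted(
        zip((math.dist(x, query) for x in train_X), train_y),
        key=lambda pair: pair[0],
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def cross_validate(X, y, n_folds=5, k=10, seed=0):
    """Return the mean k-NN accuracy over n_folds cross-validation folds."""
    # Shuffle indices once so folds are not ordered by class.
    order = list(range(len(X)))
    random.Random(seed).shuffle(order)
    # Round-robin assignment: fold sizes differ by at most one.
    folds = [order[i::n_folds] for i in range(n_folds)]

    accuracies = []
    for test_idx in folds:
        test_set = set(test_idx)
        # Train on every sample outside the current test fold.
        train_X = [X[i] for i in order if i not in test_set]
        train_y = [y[i] for i in order if i not in test_set]
        correct = sum(
            knn_predict(train_X, train_y, X[i], k) == y[i] for i in test_idx
        )
        accuracies.append(correct / len(test_idx))
    return sum(accuracies) / n_folds


# Toy data: two well-separated clusters of 20 points each (not Iris).
X = [[i * 0.1, i * 0.05] for i in range(20)] + [
    [10 + i * 0.1, 10 + i * 0.05] for i in range(20)
]
y = ["a"] * 20 + ["b"] * 20
print(cross_validate(X, y, n_folds=5, k=10))  # → 1.0
```

If all 150 Iris samples are split into five equal folds of 30, the mean fold accuracy equals the overall fraction of correct test predictions, so 96.67% would correspond to 145 of 150 predictions being correct (145 / 150 ≈ 0.9667).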